Advertising and Data Services Privacy Policy

Verve Group, Inc. and VGI CTV, Inc. (together “Verve”) are committed to protecting consumer privacy and to clearly informing users about the collection and use of information.

This Advertising and Data Services Privacy Policy (“Policy”) describes how Verve processes personal information when providing advertising, measurement, and related services to our clients (“Services”). This Policy also describes your choices regarding such processing. This Policy does not describe how we process personal information relating to our website. For information on how we process personal information relating to our website, please read our Website Privacy Policy.

“Personal Information” is information that identifies you or your household or can be reasonably linked directly or indirectly with you or your household. This Policy does not apply to anonymous, de-identified, or aggregate personal information as defined under applicable law.

Collecting Personal Information

We collect Personal Information when you knowingly and voluntarily provide it to us, such as when you provide us feedback or request information from us. This Personal Information will only be processed in connection with your feedback or request.

Verve may identify your device and collect Personal Information using an operating-system-supplied ID such as Apple’s Advertising Identifier, Google’s Advertising Identifier, or a randomly assigned unique identifier (“Device Identifier”). The advertisements delivered by Verve may include forms or other prompts that allow you to opt in to share data.

We do not knowingly collect Personal Information from any child under 13 years of age. If we are informed or become aware that our Services are being used on a site or app directed at children, we will restrict the use of ad serving information to uses permitted under the Children’s Online Privacy Protection Act of 1998.

a) Location Data

To make advertising messages as relevant as possible, precise location data associated with Device Identifiers may be used for targeting ads and as input into location targeting segments based on the characteristics of locations. Location data is also used to count impressions, clicks, and other metrics and to model household locations for the purpose of demonstrating the marketing impact of advertiser campaigns. Location data that you provide to an app or web site may be provided to Verve for ad serving or reporting purposes. In addition, supplemental information may be used, such as a device’s speed of movement and positioning, proximity of nearby devices, or similar information available from an operating system.

You can opt out of the use of precise location data for location-based tracking by using the settings available on your mobile device. Ads will continue to be selected for you based on more general information, such as location that can be inferred from Internet Protocol (IP) addresses or other data.

b) Cookies

We collect information automatically when you use our Services through mobile websites or HTML by using log files and alphanumeric identifiers known as “cookies” that are usually stored with the device’s web browser. This includes your IP address, browser type, browser language, device type and version, the date and time of your request, amount of data transferred, the source of your request (page referrer), and the nature of the content delivered to you. We generally use cookies to help with customer tracking. We may use session cookies (which expire once you close your web browser), persistent cookies (which stay on your device until you delete them), or both to provide you with a more personal and interactive experience. Persistent cookies can be removed or blocked by following the directions in your internet browser. We also use log files and cookies to improve the quality of Services and pages you have visited.

In addition to the Device Identifiers described above, Verve will collect information about the type of app you are using and details such as IP address, device type, carrier, and operating system. Verve utilizes this information to uniquely identify mobile devices to deliver targeted marketing messages to consumers. We believe that the presentation of relevant marketing messages assists in providing a more meaningful mobile web experience. For example, this technology enables Verve to limit the number of times a specific message is presented to a consumer (frequency capping), to support product category preferential marketing and to deliver advertising based on your location.

c) Web Beacons

We may permit publishers and advertisers to use a software technology called pixels (also known as web beacons), which are tiny graphics with a unique identifier, similar in function to cookies, used to report non-personal individual or aggregate information and help us better manage content on our Services. In addition, we may use pixels in our advertisements to let us know which ads have been viewed or to help tailor advertising.

d) Information from Third Parties

Our business partners may also collect Personal Information from you using a variety of technologies, including cookies, software development kits, and other digital trackers deployed on websites, mobile applications, or other digital services. They may share this information about your devices with us. We may add this to the information we obtain from your use of Services. You may be able to modify or disable the collection or sharing of Personal Information in your device settings.

Using Personal Information — Your Choices

Verve uses Personal Information to provide Services to our business partners. It is used to help tailor which ads are displayed to you as you visit web sites or apps using our Services. Information that Verve collects as you visit a web site or app will be used to create an ad serving profile used to determine the ads displayed and to report about the effectiveness of that advertising. Personal Information collected by Verve may be linked with other available data about you from our business partners, including linking the various devices you may use. Where permitted by law, we use the information we collect to improve our products and services.

Your Personal Information may be processed by Verve in the country where it was collected as well as in other countries (including the United States) where laws regarding processing of Personal Information are less stringent than the laws in your country.

To opt out of the use of Personal Information to tailor the ads you are shown, you can use the “Limit Ad Tracking” settings on your device (more information here). You will continue to see ads, but they will not be selected based on your activity across unrelated web sites or apps. Some of our Services which use cookies offer an opt-out cookie. These opt-out cookies allow you to decline ad targeting on the websites you visit. This option can be exercised here.

Sensitive Information

When we create profiles to serve ads based on your activity across different web sites or apps, we restrict the use of advertising categories that we deem sensitive based on local law, applicable industry codes, and our own internal standards.

Retention

We retain Personal Information for as long as needed to support our business needs for ad-delivery, reporting and auditing, but for no longer than two years, after which such data is deleted or minimized by having unique user level identifiers removed.

Disclosure of Personal Information

Verve may disclose certain Personal Information:

- To service providers who help us provide the Services, in which case we will take reasonable steps to ensure that these parties comply with confidentiality obligations and contractually require that these parties use the information only as needed to provide services to us;

- To third parties to whom you ask or authorize us to send Personal Information;

- To any subsidiary organizations, joint ventures, or other organizations under our control or who are under common control with us (collectively, “Affiliates”), in which case we will require our Affiliates to honor this Privacy Policy;

- If we believe in good faith that such disclosure is necessary to (a) resolve disputes or investigate problems; (b) comply with relevant laws or respond to lawful requests from law enforcement or other public authorities; or (c) protect and defend our rights or property or the rights and property of you or third parties; or

- In connection with or during negotiation of any merger, financing, acquisition or dissolution, transaction, or proceeding involving sale, transfer, divestiture, or disclosure of all or a portion of our business or assets. In the event of an insolvency, bankruptcy, or receivership, Personal Information may also be transferred as a business asset. If another company acquires our company, business, or assets, that company will possess the Personal Information collected by us and will assume the rights and obligations regarding your Personal Information as described in this Privacy Policy.

Verve may disclose Non-Personal Information to business partners, such as analytics companies, and to advertisers, ad networks, other partners, and third parties.

To opt out of the sharing of Personal Information by Verve with additional parties, you can use the “Limit Ad Tracking” settings on your device.

Verve may disclose information that is anonymous, de-identified, or aggregated for any purpose.

Security

Verve takes reasonable and appropriate technical and organizational measures to protect Personal Information from loss, misuse and unauthorized access, disclosure, alteration, and destruction, taking into due account the risks involved in the processing and the nature of the personal data.

Changes to Privacy Policy

Verve is committed to continually examining and reviewing its privacy practices and may make changes to this Privacy Policy.

Your Rights & Contact Information

Subject to local law, you may have certain rights over your Personal Information. These rights may include, depending on the circumstances, the rights to access your Personal Information, delete Personal Information collected from you, receive additional information regarding the information of yours we hold, stop certain disclosure of your Personal Information, and withdraw consent for processing you may have given to us. If you would like to exercise your rights under local law or if you have any questions or concerns about our privacy practices, you may contact us at privacy@verve.com or use the opt-out mechanisms here and here.

Verve Group, Inc. & VGI CTV, Inc.

350 Fifth Avenue

Suite 7700

New York, NY 10118

California residents should consult the “Additional Information for California Residents” and residents of the EEA and United Kingdom should consult “Additional Information for European Residents”.

Last updated: January 1, 2023

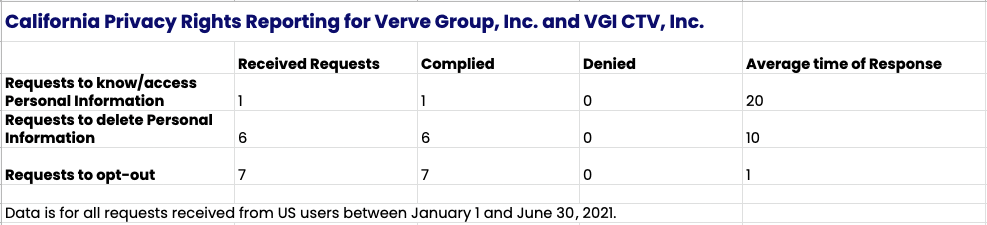

Additional Information for California Residents

Personal Data

In the course of providing the Services, Verve processes Personal Information as a “business” under the California Consumer Privacy Act (“CCPA”). As a business, we do not sell Personal Information that we process when providing the Services. We disclose the following categories of Personal Information to business partners for the purpose of providing our Services:

Categories we collect: Mobile advertising identifiers, session and persistent cookies, IP addresses, apps used or downloaded, web sites visited, browser and operating system type, type of device used, information actively provided by users responding to a form in an ad, randomly assigned identifiers, precise and general location information and system information that our SDK may collect about your device or network provider. This information is retained for a period of 14-30 days.

Categories of Sensitive Information we collect: Precise location information. We acquire location information when you provide it to an app which shares this information with us.

Sources: All the information above is collected from your device when you are using an app or visiting a website and is provided to us by the business partner you interact with when you use their app or view an ad. We also acquire this information from advertising or data exchanges.

Each Category is collected for the following business purposes: delivering ads on publisher sites, reporting on ad delivery, securing, protecting, auditing, bug and fraud detection, debugging and repair of errors and the detection, protection and prosecution of security incidents or illegal activity; enforcing our terms and policies; complying with law.

Each Category is also collected for uses that advance our commercial interests including creating profiles to target ads on behalf of advertisers, using data collected from publishers and from other third parties.

Sale/Sharing Information

Verve may disclose the categories of information described above to business partners, such as analytics companies and may share the information with advertisers, ad networks, other partners, and third parties.

Opt-Out signals

If a Verve publisher or advertiser partner has transmitted to Verve a “Do Not Sell or Share My Personal Information” signal, an “Opt-Out of the Use of My Personal Information for Interest-Based Advertising” signal, or a “Limit Use of My Sensitive Personal Information” signal, we will limit our use of Personal Information from that partner and we will act only as a service provider as defined in the CCPA. You may also exercise your opt-out rights as described below.

Access, Deletion and Opt-Out Rights

Subject to certain exceptions and restrictions, the CCPA provides you as a California Resident the right to submit requests to Verve: (i) to provide you with access to the specific pieces and categories of Personal Information collected by Verve about you, the categories of sources for such Personal Information, the business or commercial purposes for collecting such Personal Information, and the categories of third parties with which such information was shared; and (ii) to delete such Personal Information.

Please email us at ccpa@verve.com for instructions on how you can exercise your California access, deletion and Opt-Out rights. We will need to verify your identity and that the information you requested relates to you before completing your rights request. We generally do not associate Personal Information (such as device history) with identified individuals as we generally do not have information such as names or email addresses connected to device information. Therefore, we will need your Advertiser Identifier to process your request. If you do not provide us with the required information, we will be unable to process your request.

You may designate an authorized agent to make California Requests on your behalf.

We do not discriminate against you.

You also have the right to not be discriminated against (as provided for in applicable law) for exercising certain of your rights. Verve does not discriminate against California Residents for exercising their rights.

We do not sell the personal information of minors under 16 years of age without affirmative authorization.

Additional Information for California Residents last updated: March 30, 2021

Additional Information for European Residents

If you are situated in the European Union, the European Economic Area (“EEA”) or the United Kingdom, the following provisions will apply in addition to the Advertising and Data Services Privacy Policy to any Personal Information.

Data Controller

For purposes of the European Union’s General Data Protection Regulation (“GDPR”), Verve is the data controller of the Personal Information.

Legal Basis for Processing

We will process your Personal Information (a) for the purpose of legitimate interests pursued by us in accordance with Art. 6 para. 1 lit. (f) GDPR; or (b) because you have consented to the processing in accordance with Art. 6 para. 1 lit. (a) GDPR.

We will only process Personal Information based on our legitimate interests for storing and accessing Personal Information necessary to provide the Services; selecting, delivering and reporting on the effectiveness of advertisements; measuring the performance of advertisements and campaigns and investigating and preventing fraud.

Transferring your Information outside Europe

We transfer Personal Information from the European Union, the EEA and the United Kingdom, to other countries, some of which have not yet been determined by the European Commission to have an adequate level of data protection. For example, their laws may not guarantee you the same rights, or there may not be a privacy supervisory authority there that is capable of addressing your complaints. When we engage in such transfers, we use a variety of legal mechanisms, including contracts, to help ensure your rights and protection of the Personal Information.

If we transfer your Personal Information to a third-party service provider outside the European Union, the EEA or the United Kingdom, we are responsible for such transfer and we will impose obligations on the recipients of that Personal Information to protect your data to the standard required in the GDPR.

Rights under the GDPR

You have the following rights: (a) you have the right to obtain information about the data processed about you (Art. 15 GDPR); (b) if inaccurate personal data is processed, you have a right to rectification (Art. 16 GDPR); (c) if the legal requirements are met, you may request the erasure or restriction of processing as well as object to processing (Art. 17, 18, 21 GDPR); and (d) if you have consented to the data processing or a contract for data processing exists and the data processing is carried out with the help of automated procedures, you may have a right to data portability (Art. 20 GDPR).

We generally do not associate Personal Information (such as device history) with identified individuals as we generally do not have information such as names or email addresses connected to device information. Therefore, we will need your Advertiser Identifier to fulfill your rights. If you do not provide us with the required information, we will be unable to ensure your rights. To exercise your rights, or for questions or further information, please contact us at privacy@verve.com.

Additional Information for European Residents last updated: March 30, 2021

Our Data Protection Officer:

Fieldfisher Tech Rechtsanwaltsgesellschaft mbH

Amerigo-Vespucci-Platz 1

20457 Hamburg, Germany